Looking For A Psychologist Near You?

New Vision Psychology can help with 5 convenient locations across Sydney.

Explore our locations

Demand for mental health care continues to increase across Australia, with more people turning to AI platforms like ChatGPT for support. Over 10% of Australians are using generative AI tools for mental health advice, according to a 2025 report by Australians for Mental Health.

There are ongoing concerns over the use of ChatGPT and other AI platforms in the context of mental health. The question is not just whether ChatGPT can be used as a substitute for a psychologist, but whether it should legally and ethically be able to take on the role in the first place.

Regarding the efficacy of using AI for mental health advice, here’s the general stance across the mental health community: AI tools offer some merit in terms of accessibility and immediacy, but an AI tool will not be able to provide clinical, individualised psychological or counselling care. There is a considerable difference between getting general wellbeing information (e.g., motivation or self-esteem tips) and receiving support for mental health conditions. Large Language Models (LLMs) are not designed or trained to assess, diagnose or treat mental health conditions. Relying on these tools for mental health support may carry risks, including the potential for incomplete or inappropriate guidance.

In short, ChatGPT and AI platforms are not a replacement for professional, qualified mental health services. Individuals seeking mental health support are encouraged to consult a registered or clinical psychologist.

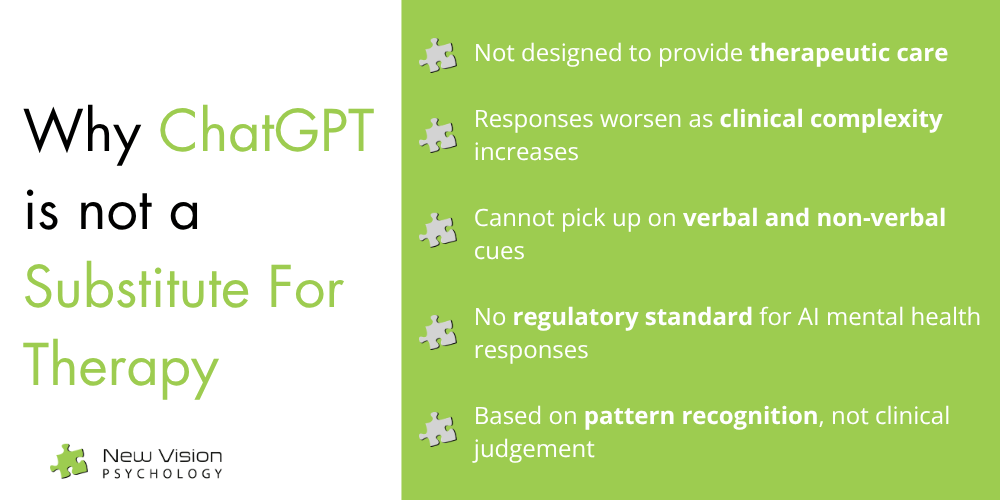

Current research indicates that ChatGPT generally cannot take the place of a psychologist, as it is not designed to provide therapeutic care. Relying on it for mental health advice, particularly in complex situations, may result in harm. The same applies to other generative AI tools or chatbots like Gemini and Claude.

ChatGPT does not have the clinical expertise required to provide mental health assessments and interventions. According to a 2024 study published in Frontiers in Psychiatry, the information and recommendations generated by ChatGPT became less appropriate as simulated clinical scenarios increased in complexity, with some responses raising safety concerns.

Even AI chatbots marketed as mental health tools have shown mixed results. A 2023 study published on ScienceDirect found that Woebot, an AI chatbot designed to deliver Cognitive Behavioural Therapy strategies, showed no significant difference in outcomes compared to self-help behavioural approaches like journaling.

In March 2026, researchers at Brown University evaluated the ability of generative AI models like ChatGPT to provide therapy-style responses. The AI responses were found to violate ethical principles for psychological care when reviewed by counsellors and psychologists.

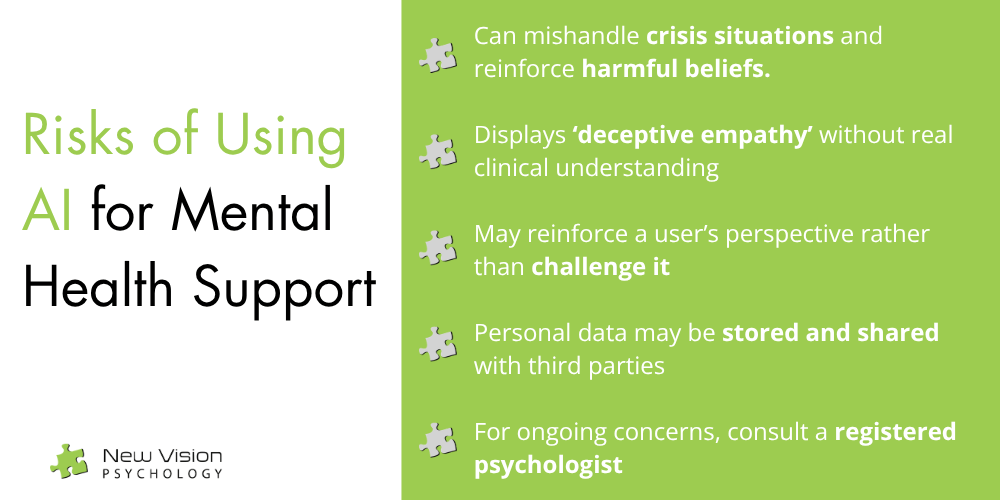

The evaluation highlighted 15 potential risks of using AI for therapeutic advice, including mishandling crisis situations, reinforcing harmful beliefs, and displaying “deceptive empathy” that mimics care without real understanding or clinical judgement.

In Australia, mental health professionals undergo extensive training and are required to adhere to a code of conduct regulated by the Australian Health Practitioner Regulation Agency (AHPRA).

In contrast, there is little to no regulatory standard that guides how AI tools generate responses for mental health contexts. This raises questions about the quality, reliability and accountability of the information provided by AI tools.

Clinical and registered psychologists observe a range of factors during therapy, including verbal and non-verbal cues like behaviour, body language, tone, and communication patterns, to inform their understanding.

ChatGPT and similar AI chatbots largely rely on text-based input, which significantly limits their ability to fully assess context or provide nuanced, individualised care.

AI chatbots are designed to generate responses that are contextually relevant and engaging. In some cases, this may result in responses that align closely with a user’s existing perspective, rather than offering balanced or clinically appropriate guidance.

There have been documented instances where AI chatbots have failed to adequately challenge potentially harmful ideas and have, in some cases, supported users in carrying out harmful behaviours. This highlights a key limitation in their ability to provide safe and appropriate support.

A trained psychologist uses clinical judgement to determine when to provide validation and when to gently challenge unhelpful patterns. AI chatbots cannot fully replicate the discernment and individualised decision-making that a psychologist or therapist can provide.

Psychologists and therapists are bound by strict confidentiality and ethical obligations – personal information shared during therapy sessions is protected and will not be disclosed to anyone else, with limited exceptions (e.g., risk of harm).

Meanwhile, every message sent to an AI chatbot is stored and processed according to the platform’s privacy policy. The data may be used to improve the platform’s systems and may be shared with third parties.

The risk of data breaches is another important consideration, especially because personal mental health information is sensitive in nature.

In conclusion, while ChatGPT and similar AI tools can produce responses that appear genuine and supportive, these tools are algorithmic systems based on pattern recognition rather than professional clinical training, judgement, or interaction. For individuals seeking support, particularly for complex or ongoing concerns, it is recommended to consult a clinical or registered psychologist.
